A few weeks ago my coworker Jess asked me for help setting up Claude Code to access a handful of MCP servers. She wanted to give Claude access to the tools she used to do her job, such as Jira, Pylon, Notion, GitHub, and Fathom.

We figured out how to add the various MCPs, and once Jess had them installed I played around with them a bit too. I felt like the guy in the old Maxell cassette tape commercials: blown away.

In one short sentence I could ask Claude to read a Jira ticket, write the code, add tests, and open a PR, or summarize the tickets assigned to me that I hadn't commented on in more than a day, or tell me why a given customer instance was performing poorly. These are things that I've spent my career doing manually (or automating with scripts if they got too annoying). But now the barrier to writing those scripts was non-existent, and with access to all of our various tools Claude could also do whatever was asked of it. (We have BAAs with Anthropic and all of our tool vendors; without them none of this would be possible in a healthcare context.)

Since then I’ve been on a journey at Canvas through various stages of agent-based programming:

1. Using Claude Code directly to give context on parts of our codebase for PR reviews and to generate new code for features or bug fixes

2. Using MCPs to add context from our various tools in service of step 1, and also using Claude for new purposes such as managing my time

3. Packaging MCP definitions and skills for other people to use (Support Stack)

4. Using the Claude Agent SDK + Slack to build an agent for other people to use (Investigator)

Support Stack

Jess and I aligned on a goal: take this massive new set of capabilities and use them to make it easier for our support staff to investigate issues. We settled on an achievable first version: a git repository Canvas staff could clone locally, filled with the correct MCP definitions and a couple of skills that give Claude helpful context and instructions on how to do an investigation. This was step three. The first time I tried it, a customer had just asked a question, so I gave it to Support Stack to answer. It not only pulled data from our disparate sources (GitHub, Pylon, Sentry), it also found the bug and offered to draft a fix (which I took it up on and then shipped to the customer).

In short, it worked. We released the first version of "Support Stack" internally and our support staff immediately picked it up. We didn’t stop there: we iterated on context whenever Claude got confused or offered output that wasn't helpful. One example: it blamed a customer plugin for performance issues in a way that wasn’t plausible (the plugin had no way to affect the performance of the instance; it was merely correlated in time with the performance issues). We addressed each mistake by adding context, and it started saving us serious time. Support questions that would have taken hours to answer and involved multiple people were completed in minutes. We saved engineering time: previously, support would often get stuck and have to wait for an engineer to find a root cause or validate whether a feature was working like it was supposed to. And we saved support time, because it's hard to research all of these sources manually, and nearly impossible for one person to carry all the context needed to navigate a complex issue through to completion in a system as large as ours.

Investigator

Support Stack proved there was real benefit to agent-based investigation, and it gave me the idea to do the same for our automated alerts. With the Claude Agent SDK I could automatically investigate any alert that came in from our automated monitoring. In the same way that we gave Support Stack access to MCPs, I gave my nascent agent access to tools via APIs. Much of the implementation was the same as Support Stack: local access to code and tools to search it, access to GitHub for searching or creating PRs, and access to Sentry, Elasticsearch, and InfluxDB for detailed data about errors, logs, and metrics. I also built some internal APIs to give Investigator context about our applications and our customers’ plugins, plus some glue to prompt the agent to investigate any new alert that made its way to Slack. These investigations were immediately useful. They provided context far beyond "high CPU usage" or "high memory usage": Investigator could look at recent web requests and determine what the actual cause was, or see that a customer had uploaded a new version of a plugin immediately before memory usage tripled.
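To make the "glue" idea concrete, here is a minimal sketch of how an alert could be turned into an investigation prompt and the result posted back to the alert's thread. It is not Investigator's actual code. The `Alert` fields, `build_prompt`, `handle_alert`, and the two callables (`run_agent`, `post_reply`) are all hypothetical names; in a real system the callables would wrap the agent SDK and the Slack API, which are deliberately left out here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Alert:
    """A monitoring alert as it might arrive in Slack (hypothetical shape)."""
    title: str       # e.g. "high memory usage"
    instance: str    # which customer instance fired the alert
    channel: str     # Slack channel the alert was posted in
    thread_ts: str   # timestamp of the alert message, used to reply in-thread


def build_prompt(alert: Alert) -> str:
    """Turn a terse alert into an open-ended investigation request."""
    return (
        f"An alert fired: '{alert.title}' on instance '{alert.instance}'.\n"
        "Investigate the likely root cause. Check recent errors, logs, metrics, "
        "web requests, and any plugin uploads shortly before the alert.\n"
        "Reply with a short summary and the evidence behind it."
    )


def handle_alert(
    alert: Alert,
    run_agent: Callable[[str], str],
    post_reply: Callable[[str, str, str], None],
) -> str:
    """Run one investigation and post the findings in the alert's thread."""
    findings = run_agent(build_prompt(alert))
    post_reply(alert.channel, alert.thread_ts, findings)
    return findings


if __name__ == "__main__":
    # Stand-ins so the sketch runs without Slack or an agent SDK.
    def fake_agent(prompt: str) -> str:
        return f"(stub) would investigate: {prompt.splitlines()[0]}"

    def fake_post(channel: str, thread_ts: str, text: str) -> None:
        print(f"[{channel} @ {thread_ts}] {text}")

    handle_alert(
        Alert("high memory usage", "example-instance", "#alerts", "1700000000.000100"),
        fake_agent,
        fake_post,
    )
```

Passing the agent and the Slack poster in as plain callables keeps the glue testable on its own and makes it easy to swap either side later.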

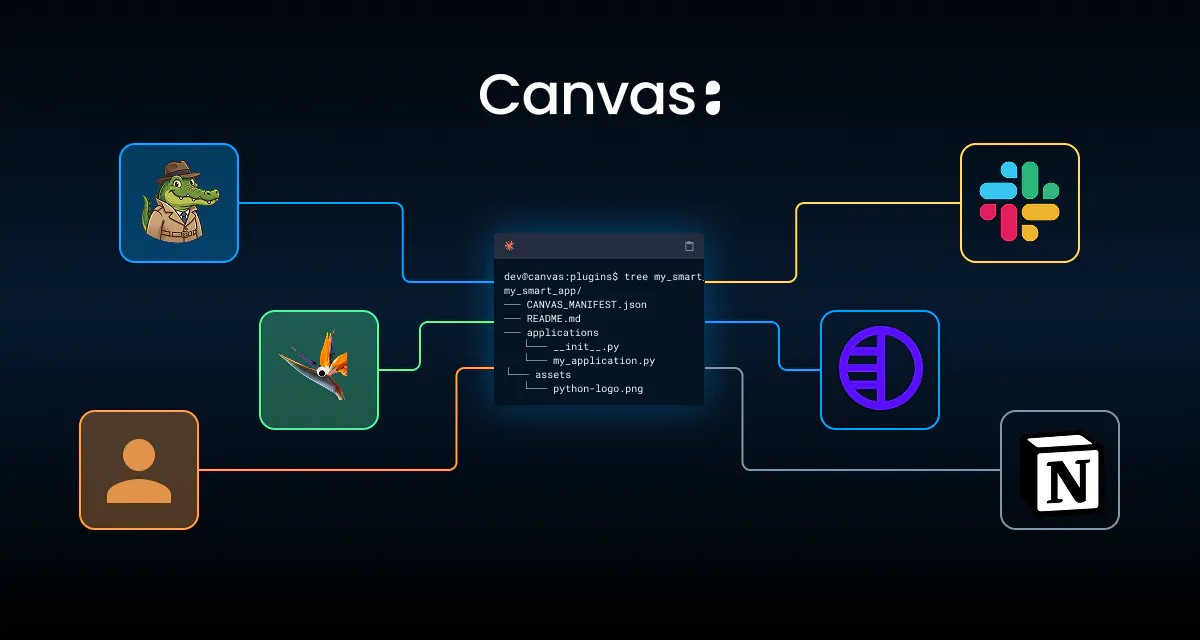

We gave it a name, Investigator, and an alligator avatar (it’s the investi-gator after all).

Naturally I wanted to continue asking questions of Investigator in Slack, inside the alert threads, so I added the ability for Investigator to respond when people mentioned it. As soon as I had that feature done I realized Investigator was going to be useful outside the context of alerts: I could just mention it in any Slack channel and it would respond in a thread. This led to an explosion in tools and context (see the appendix) that continually increased its ability to answer a wider and wider range of questions. If asked, Investigator can search Notion and summarize our company values, tell us the key contacts for any customer or the last time we met with them (and link to the meeting transcript), and perform an increasing range of support tasks that our support team used to have to ask an engineer to do. Much of our usage of Support Stack in the terminal moved to Slack, where we could just mention Investigator and get an immediate response.

Slack is a natural place for this capability to compound. Investigator will respond to DMs, but it's so much more useful if people talk to it in public. Everyone benefits from the answers, and people can see what its capabilities are and expand their own understanding of them. Often I'm watching the output to ensure it is high quality, and, if it isn't, I'll ask Investigator to open a PR to its own code with a change to the context or system prompt. We also built thumbs up/thumbs down buttons into each response so I can go back and look at any that were exemplary or missed the mark.
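A feedback loop like this can be small. The sketch below shows one way to store thumbs up/thumbs down votes and list the responses worth a second look. It uses only Python's built-in `sqlite3`. The table layout and the function names are assumptions for illustration, not how Investigator actually stores feedback.

```python
import sqlite3


def open_feedback_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the feedback store; defaults to an in-memory database."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS feedback (
               response_id TEXT NOT NULL,
               user_id     TEXT NOT NULL,
               rating      INTEGER NOT NULL CHECK (rating IN (-1, 1)),
               PRIMARY KEY (response_id, user_id)
           )"""
    )
    return conn


def record_feedback(
    conn: sqlite3.Connection, response_id: str, user_id: str, thumbs_up: bool
) -> None:
    """Store one vote; a repeat vote from the same user replaces the old one."""
    conn.execute(
        "INSERT OR REPLACE INTO feedback (response_id, user_id, rating) VALUES (?, ?, ?)",
        (response_id, user_id, 1 if thumbs_up else -1),
    )
    conn.commit()


def responses_to_review(conn: sqlite3.Connection) -> list[str]:
    """Return ids of responses with a negative net score, worst first."""
    rows = conn.execute(
        "SELECT response_id FROM feedback "
        "GROUP BY response_id HAVING SUM(rating) < 0 "
        "ORDER BY SUM(rating) ASC, response_id ASC"
    ).fetchall()
    return [row[0] for row in rows]
```

Keying votes on `(response_id, user_id)` means a person who changes their mind overwrites their earlier vote instead of counting twice.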

The Future

I’ve been a pragmatist my whole career. If there’s value, I want to capture it and use it to find efficiency for myself and my coworkers... and we have found an unreasonable amount of value in from-scratch, purpose-built applications of agents at Canvas. This isn't really a product you can buy. The most productive uses of this technology are fully custom: skills and context specific to a given role or company, and MCPs or APIs built to give an agent company- or department-specific context.

My next step is building a world model for Canvas that understands and updates our customer relationships, in-flight features, strategy, and more... and that proactively reaches out to humans when context is missing. I’ve already started building it. I got a DM this morning because it couldn't figure out which instance belonged to a specific customer, and it correctly figured out that I was likely to know the answer. So it just messaged me, and then updated its database with my answer. A year ago that missing data would have sat for weeks or months until someone noticed and updated it. Now we have an agent that notices those gaps immediately and just… asks.
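The notice-a-gap, ask-a-human, update loop can be sketched in a few lines. This is an illustration only. The `CustomerRecord` shape, the "most recent contact probably knows" heuristic, and the `ask` callable are all assumptions; the post does not describe how the real system decides whom to message.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class CustomerRecord:
    """One entry in a hypothetical world model."""
    name: str
    instance: Optional[str] = None  # None marks a gap to be filled
    # Staff who recently worked with this customer, most recent first.
    recent_contacts: list[str] = field(default_factory=list)


def find_gaps(records: list[CustomerRecord]) -> list[CustomerRecord]:
    """Return the records that are missing their instance."""
    return [r for r in records if r.instance is None]


def pick_person_to_ask(record: CustomerRecord, fallback: str) -> str:
    """Assumed heuristic: the most recent contact is most likely to know."""
    return record.recent_contacts[0] if record.recent_contacts else fallback


def fill_gaps(
    records: list[CustomerRecord],
    ask: Callable[[str, str], Optional[str]],
    fallback: str = "#support",
) -> int:
    """Ask a human about each gap and store any answer; return how many were filled."""
    filled = 0
    for record in find_gaps(records):
        person = pick_person_to_ask(record, fallback)
        answer = ask(person, f"Which instance belongs to {record.name}?")
        if answer and answer.strip():
            record.instance = answer.strip()
            filled += 1
    return filled
```

Here `ask` stands in for whatever sends a DM and waits for the reply. Returning `None` for "no answer yet" leaves the gap in place so it can be retried later.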

I'd love to know if you or your company are also building things like this. The claude-agent-sdk module is extremely powerful but also has some sharp edges to watch out for. If you've read this far and want to build something similar (or have already started), reach out and I'll share a context file you can give your own agent harness so it can avoid those pain points and skip the early mistakes I made.